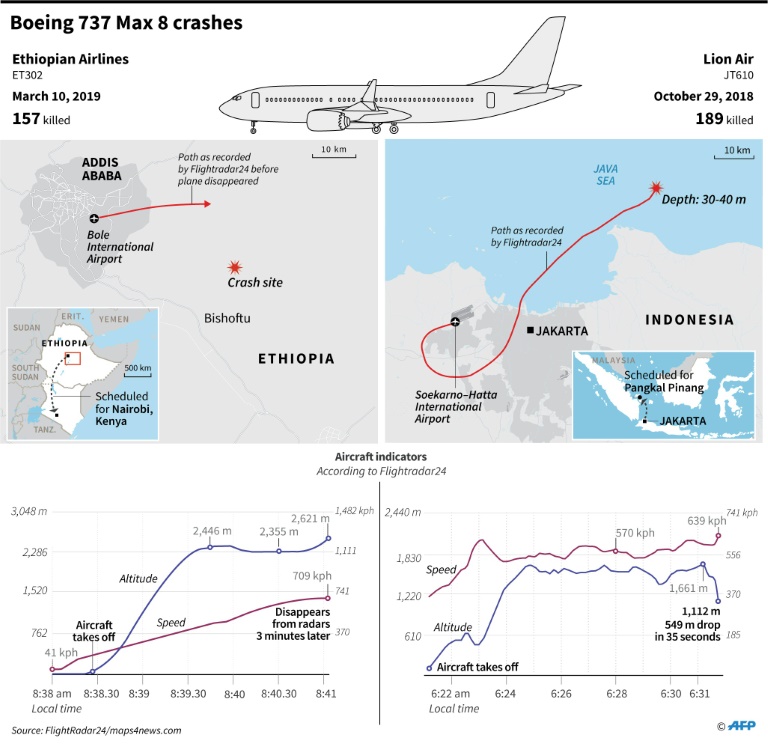

In October 2018, a Lion Air Boeing 737 Max 8 crashed shortly after take-off from Jakarta. Four and a half months later, an Ethiopian Airlines 737 Max 8 crashed shortly after take-off from Addis Ababa. A total of 346 passengers and crew died in the two disasters, which led to the grounding of the Boeing Max 8 fleet.

In a statement, the manufacturer confirmed that it is updating a software routine known as the Maneuvering Characteristics Augmentation System (MCAS). This system is designed to prevent the Max 8 from stalling and was introduced to counter the 737’s modification-induced tendency to pitch up at slow speeds. In other words, the MCAS is a safety device. Crucially, the system can be overridden if pilots suspect a malfunction.

Flying is Remarkably Safe

Tragic though these events are, it is important to remember that, thanks to the efforts of airframers, airlines, regulators, trade associations, and unions, flying is remarkably safe.

In 2017, the world’s airlines recorded their safest year, with 1.1 accidents for every 1 million flights. A year later, the accident rate rose to 1.35 accidents for every 1 million flights. Between 2010 and 2019, one passenger died on a U.S. airline: 43-year-old Jennifer Riordan, who was killed in 2018 when an uncontained engine event fired shrapnel into the fuselage.

Flying is much safer than traveling by automobile, motorcycle, subway, train or bus. Motorbiking is over 3,000 times deadlier than flying. Traveling in a car is about 100 times more deadly, while taking the train is twice as deadly.

You are safer sitting in a pressurized aluminum tube packed with kerosene and electrical equipment seven miles above the earth than you are in your own home.

Keeping Aviation Safe

Safety resources such as NASA’s Aviation Safety Reporting System (ASRS) help keep flying safe. The ASRS contains thousands of safety reports filed anonymously by aviation professionals.

Earlier this month, The Atlantic published several of those accounts on Boeing’s MCAS software. The reports that describe runaway trim problems make for interesting reading. In an account filed in November 2018, for example, a B737MAX Captain reported an autopilot anomaly that led to a brief nose-down situation.

Another report, also filed in November, recorded that the “aircraft pitched nose-down after engaging autopilot on departure. Autopilot was disconnected and flight continued to destination.”

V @laraseligman—#EthiopianAirlinesCrash "Both #Boeing and the #FAA were informed of the specifics of this story and were asked for responses 11 days ago, before the second crash of a 737 MAX" #B737MAX https://t.co/Lpf3WaE3Uh #AvGeek #Airliners #aviationsafety #airtravel #MCAS pic.twitter.com/uNAP6sBkjC

— Mark Collins (@Mark3Ds) March 17, 2019

Some accounts expressed concerns about training and documentation, with one report stating that some systems, such as the MCAS, are not fully described in the B737MAX Flight Manual. The alleged omissions annoyed the Captain, who wrote that it is “unconscionable that a manufacturer, the [Federal Aviation Administration (FAA)], and the airlines would have pilots flying an airplane without adequately training, or even providing available resources and sufficient documentation to understand the highly complex systems that differentiate this aircraft from prior models.”

In light of these allegations, it is reassuring to know that Boeing, in consultation with the FAA, is reviewing and updating the MCAS software and associated protocols.

Investigators Need Time

While the events and opinions recorded in NASA’s ASRS database do not establish a link between Boeing’s MCAS software and the loss of Ethiopian Airlines Flight ET302, they do inform investigators’ frame of analysis. Alternative explanations include a catastrophic mechanical failure, pilot suicide, and terrorism.

The bottom line is that investigators must be given the time and space to follow the evidence. The public’s clamor for answers is understandable, but clamor is dysfunctional because it can restrict investigators’ field of vision. Pressure distorts.

Latent Errors and Complex Systems

As with every complex socio-technical system, aviation harbors “latent errors” – system states that can, under specific conditions, produce incidents, accidents, and near-misses.

https://www.youtube.com/watch?v=M-qKWXuHQeg

In 1998, Dr. Richard Cook of the University of Chicago published a paper on complex systems and organizational accidents – upsets that originate in a system’s architecture and operating practices.

According to Cook, complex systems “are intrinsically hazardous.” They “contain changing mixtures of failures latent within them,” and “run in degraded mode … as broken systems.” Most worryingly, said Cook, “catastrophe is just around the corner.” The author opines that it is impossible to eliminate the possibility of catastrophic failure, because “the potential for such failure is always present by the system’s own nature.”

Ironically, innovations intended to make a system safer or more efficient can, by introducing novel, poorly understood failure paths, make it less safe. Innovations that are poorly documented, or for which operators are inadequately trained, create latent errors – accidents-in-waiting.

Striking a more optimistic note, Cook asserts that safety-minded operatives can, by identifying and repairing system weaknesses, restore safety margins: “Human operators have dual roles: as producers and as defenders against failure.” He writes that practitioners actively adapt the system “to maximize production and minimize accidents. These adaptations often occur on a moment by moment basis.”

Humans as Safety-Guardians

Like Cook, Professor Erik Hollnagel frames operatives as safety-guardians: “Humans are … a resource necessary for system flexibility and resilience [providing] flexible solutions to many potential problems.” Often, the human component is singled out for blame when systems fail. Operatives are framed as the weakest component. In extremis, operatives are demonized. Cook and Hollnagel see matters differently. For them, operatives are the last line of defense.

Whatever the cause(s) of the Flight ET302 disaster, you can be sure that investigators will objectively evaluate every plausible explanation, from software issues, inadequate training, and catastrophic mechanical failure to terrorism and pilot suicide.

Finally, it is worth noting that in 2013 aviation authorities grounded Boeing’s mold-breaking 787 Dreamliner. It is now a success.

Disclaimer: The views and opinions expressed here are those of the author and do not necessarily reflect the editorial position of The Globe Post.